What Is Test Marketing and Why It's Non-Negotiable in 2026

Test marketing is the practice of launching a campaign, message, or channel strategy at reduced scale — or in a controlled environment — before committing full budget and resources. It's one of the oldest disciplines in marketing, but in 2026 it carries more weight than ever. The pressure to demonstrate ROI didn't ease after 2025. If anything, executive scrutiny intensified. Industry research from HubSpot, Gartner, and Forrester consistently showed that proving financial impact was the top concern for senior marketers throughout last year — and that tension between spend and measurable outcomes is carrying straight into this year.

The marketers who thrived in 2025 weren't the ones who spent the most or moved the fastest. They were the ones who tested deliberately, measured honestly, and scaled only what the data supported. If you're building a 2026 marketing plan right now, test marketing isn't a nice-to-have — it's the structural foundation that protects every dollar you allocate.

The Attribution Gap That Makes Testing Essential

Attribution became significantly harder to execute in 2025. Third-party cookies covered less and less of the audience (Safari and Firefox already block them by default), GDPR and CCPA continued to constrain data use, and platform data stayed locked inside walled ecosystems like Meta, Google, and LinkedIn. Traditional multi-touch attribution models lost accuracy, forcing teams to rely on partial signals and inferred outcomes. This directly weakened confidence in performance reporting at the board level.

Test marketing sidesteps the attribution problem by design. When you run a controlled experiment, isolating one variable and measuring against a clear baseline, you don't need perfect attribution across every touchpoint. You need a clean comparison between a test group and a control group within a bounded test environment. That's a far more defensible number to bring to leadership than a modeled attribution percentage from a platform with obvious incentives to claim credit.

Auditing 2025 Results Before You Run a Single Test

There's a critical mistake teams make: they start testing new ideas without first understanding why their current approach is performing the way it is. Running experiments without a performance baseline is expensive guesswork. The right foundation for a 2026 test marketing strategy is a rigorous audit of 2025 results.

Phase 1 — Gather the Raw Data

Pull reports from every channel where you ran campaigns in 2025: Google Analytics for organic and paid traffic, your ad platforms for cost-per-click and conversion data, your CRM for pipeline influence and closed revenue, and your email platform for open rates, click rates, and revenue attributed per send. Don't rely on any single platform's attribution. Aggregate from multiple sources and flag where the numbers conflict — those conflicts are often your most interesting findings.

Sort your 2025 campaigns by revenue generated or leads produced. Highlight the top 20% that drove the most measurable value. This isn't just an efficiency exercise — it tells you where the market responded, which is the only accurate signal of where to focus your testing attention in 2026.
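The ranking step can be sketched in a few lines of Python. This is a minimal illustration, assuming you have already exported one record per campaign; the campaign names, channels, and revenue figures below are hypothetical placeholders, not real results.

```python
import math

def top_campaigns(campaigns, key="revenue", fraction=0.2):
    """Return the top `fraction` of campaigns ranked by `key`, highest first."""
    ranked = sorted(campaigns, key=lambda c: c[key], reverse=True)
    # Round before taking the ceiling to avoid float artifacts (15 * 0.2 is
    # slightly above 3.0), and always keep at least one campaign.
    cutoff = max(1, math.ceil(round(len(ranked) * fraction, 9)))
    return ranked[:cutoff]

# Hypothetical 2025 campaign records; figures are illustrative only.
campaigns_2025 = [
    {"name": "spring_webinar", "channel": "email", "revenue": 42000},
    {"name": "brand_search", "channel": "paid_search", "revenue": 88000},
    {"name": "retargeting_q3", "channel": "paid_social", "revenue": 15500},
    {"name": "winback_series", "channel": "email", "revenue": 61000},
    {"name": "partner_newsletter", "channel": "email", "revenue": 9800},
]

top = top_campaigns(campaigns_2025)
total_revenue = sum(c["revenue"] for c in campaigns_2025)
share = sum(c["revenue"] for c in top) / total_revenue
print([c["name"] for c in top], f"{share:.0%} of revenue")
```

Swapping `key="revenue"` for a leads field gives the same ranking by lead volume, if that is the measure your team reports on.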

Phase 2 — Identify Patterns Worth Replicating (and Anomalies Worth Testing)

Your 2025 data will show seasonal spikes, audience segments that over-performed, and creative formats that consistently beat benchmarks. These are your hypotheses for 2026. If email campaigns targeting re-engagement of dormant subscribers outperformed acquisition campaigns by 40%, that's not a coincidence — it's a signal that your retention messaging is strong and your acquisition messaging needs testing. Document these findings explicitly. Marketers who can articulate "this worked because X" enter 2026 testing cycles with enormous advantages over teams who simply replicate what worked without understanding why.

How to Structure a Marketing Test That Actually Proves Something

Most marketing tests fail not because the idea was wrong, but because the test was designed poorly. A test with no clear hypothesis, no defined success metric, and no minimum duration teaches you nothing — and costs real budget to learn nothing.

Write the Hypothesis First

Before touching your marketing automation platform or launching a campaign variant, write the hypothesis in plain language: "If we change X, we expect to see Y change in Z metric, because of our assumption about audience behavior A." Every placeholder in that sentence matters. "X" forces you to isolate one variable. "Y" and "Z" force you to define what success looks like, and on which metric, before you see the results, which guards against confirmation bias. "A" forces you to articulate why you believe the change will have the effect you expect, and that assumption is what the test confirms or refutes whichever way the result goes.

Choose the Right Test Type for Your Goal

Not every question requires an A/B split. Understanding which test type fits your objective prevents wasted cycles:

- A/B tests are best for isolating a single variable — subject line, CTA copy, send time, or headline. They require the least traffic to reach significance and produce the clearest learnings.

- Multivariate tests evaluate combinations of variables simultaneously and require significantly higher volume. Use these only when your audience is large enough to give every combination a statistically valid sample.

- Holdout tests suppress a campaign from a randomly selected audience segment and compare their behavior to the exposed group. This is the gold standard for proving incrementality — and the approach most capable of surviving executive scrutiny.

- Sequential tests (before-and-after) work for channel-level decisions where splitting an audience isn't practical, but they're vulnerable to external variables like seasonality or news events.
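Of the four test types, the holdout test is the one whose arithmetic is most often asked about, so here is a minimal sketch of it. It assumes a simple random split and a single conversion count per group; the function names, the 10% holdout fraction, and the conversion figures are illustrative assumptions rather than a prescribed method.

```python
import random

def split_holdout(audience_ids, holdout_fraction=0.10, seed=2026):
    """Randomly split an audience into (exposed, holdout) lists."""
    rng = random.Random(seed)  # fixed seed so the split is reproducible
    shuffled = list(audience_ids)
    rng.shuffle(shuffled)
    holdout_size = round(len(shuffled) * holdout_fraction)
    return shuffled[holdout_size:], shuffled[:holdout_size]

def incrementality(exposed_conversions, exposed_size,
                   holdout_conversions, holdout_size):
    """Compare conversion rates between the exposed and holdout groups."""
    exposed_rate = exposed_conversions / exposed_size
    holdout_rate = holdout_conversions / holdout_size
    absolute_lift = exposed_rate - holdout_rate
    return {
        "exposed_rate": exposed_rate,
        "holdout_rate": holdout_rate,
        # Relative lift is undefined if the holdout group never converts.
        "relative_lift": absolute_lift / holdout_rate if holdout_rate else None,
        # Conversions the campaign added beyond what would have happened anyway.
        "incremental_conversions": absolute_lift * exposed_size,
    }

# Hypothetical audience of 10,000 contacts and made-up conversion counts.
exposed, holdout = split_holdout(range(10_000))
report = incrementality(450, len(exposed), 40, len(holdout))
print(report)
```

The incremental conversions figure, not the raw conversion count of the exposed group, is the number to carry into a revenue conversation.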

Define Statistical Significance and Minimum Duration Upfront

The two most common ways to invalidate a test are stopping it too early, usually the moment you see a result you like, and running it so long that external conditions contaminate the results. Set your minimum sample size before the test launches using a sample size calculator; a 95% confidence level is the usual threshold for business-critical decisions. Fix the test window in advance as well: for email tests, 1–2 weeks is typically sufficient; for paid media, 2–4 weeks; for landing page tests, long enough to collect at least 100 conversions per variant.
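For readers who want to see what a sample size calculator does under the hood, the sketch below uses the standard normal-approximation formula for comparing two conversion rates. The 80% power setting, the 4% baseline rate, and the 20% relative lift are assumptions chosen for illustration; substitute your own baseline and the smallest lift you would act on.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline_rate, relative_lift,
                            confidence=0.95, power=0.80):
    """Approximate recipients needed per variant for a two-sided test
    comparing two conversion rates (normal approximation)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)  # rate you hope the variant hits
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)

# Assumed inputs: 4% baseline conversion, 20% relative lift worth detecting.
n = sample_size_per_variant(baseline_rate=0.04, relative_lift=0.20)
print(f"Recipients needed per variant: {n}")
```

Halving the lift you want to detect roughly quadruples the required sample, which is why small expected effects often cannot be tested on small lists.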

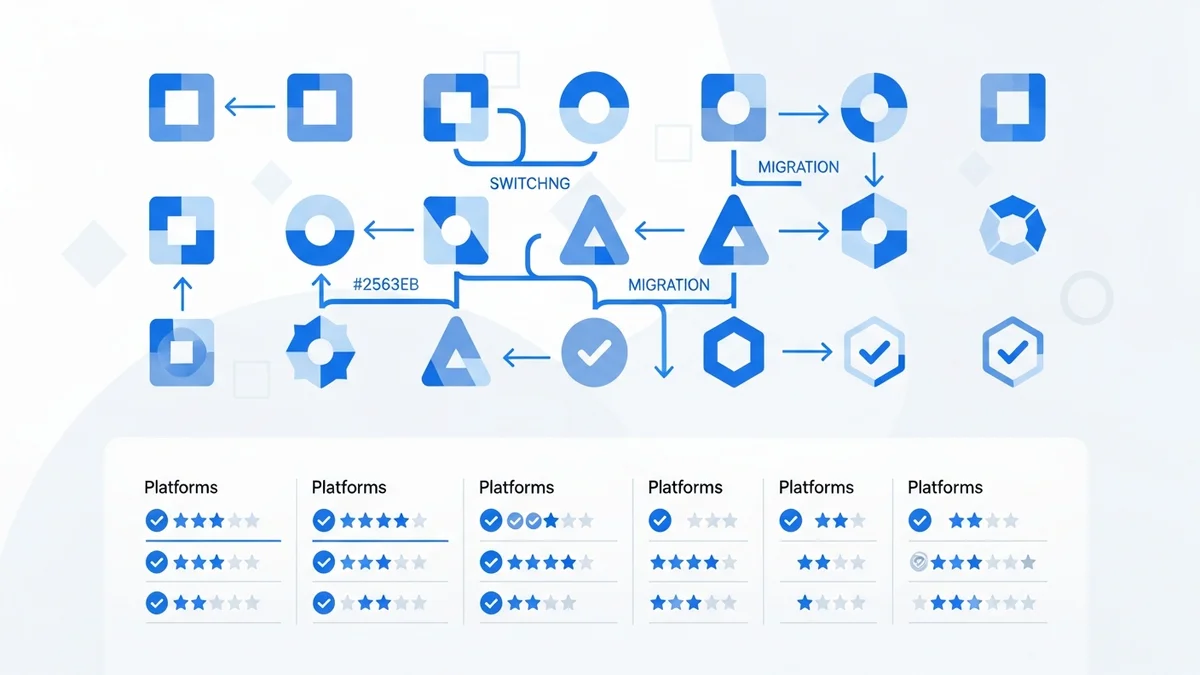

Marketing Automation Tools Built for Serious Testing

The platform you use to run tests matters enormously. Some tools bolt on A/B testing as an afterthought — you get subject line splits and nothing else. Others give you workflow-level experimentation, audience holdouts, and revenue-attributed results that connect directly to CRM data. Here's how the major platforms stack up for test marketing specifically.

| Platform | Test Types Supported | Revenue Attribution | Starting Price (per month) | Best For |
|---|---|---|---|---|
| HubSpot Marketing Hub | A/B (email, landing pages, CTAs), multivariate landing pages | Full CRM-connected revenue attribution | $800 (Professional) | Mid-market teams needing end-to-end attribution |
| ActiveCampaign | A/B split testing for emails and automation workflows | Deal revenue via CRM integration | $29 (Plus) | SMBs running high-volume email experiments |
| Klaviyo | A/B testing for email and SMS, predictive send-time testing | Native e-commerce revenue attribution | $20 (Email, up to 500 contacts) | E-commerce brands testing revenue-per-send improvements |
| Mailchimp | A/B (Essentials+), multivariate (Standard+) | Basic e-commerce revenue tracking | $20 (Essentials) / $35 (Standard) | Early-stage teams needing accessible entry-level testing |
| Customer.io | A/B testing across email, push, SMS, in-app messages | Event-based conversion attribution | $100 (Essentials) | SaaS and product-led teams testing lifecycle messaging |
| Marketo Engage | A/B, multivariate, champion/challenger programs | Full Salesforce CRM revenue attribution | $1,195 (Growth) | Enterprise teams running complex multi-channel tests |
| GetResponse | A/B testing for email, landing pages, subject lines | Basic conversion tracking | $19 (Email Marketing) | Budget-conscious teams needing reliable email testing |

The tool that deserves the most attention for serious test marketing in 2026 is Customer.io. Its event-driven architecture means you can build tests around actual product behaviors — not just email opens — which produces much stronger signal about what messaging actually changes user actions. For e-commerce, Klaviyo's native revenue attribution makes it nearly impossible for leadership to question whether a test winner actually moved the revenue needle.

Reading Results and Making the Call to Scale

The moment a test concludes — or hits statistical significance — most teams make one of two errors. They either over-interpret a small win ("subject line A outperformed B by 3% opens, so we should rewrite all our subject lines") or they dismiss a result because it didn't meet their prior expectations. Neither instinct serves the business.

Statistical Significance vs. Business Significance

A result can be statistically significant — meaning it's unlikely to be random — without being business significant. If a landing page headline test produces a 0.2% improvement in conversion rate on a page that generates 50 leads per month, the practical impact on revenue is trivial. Statistical significance tells you the result is real. Business significance tells you whether acting on it is worth the cost of implementation. Always calculate the dollar value of the improvement before deciding whether to roll out a test winner at scale.
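The dollar-value check can be as simple as the sketch below. It takes the 50-leads-per-month page from the example and reads the 0.2% improvement as a relative lift in lead volume; the 10% close rate, the $5,000 revenue per customer, and the $2,000 implementation cost are assumptions added purely for illustration.

```python
def annual_value_of_lift(monthly_leads, relative_lift,
                         close_rate, revenue_per_customer):
    """Estimate the extra yearly revenue a conversion lift would produce."""
    extra_leads_per_year = monthly_leads * relative_lift * 12
    return extra_leads_per_year * close_rate * revenue_per_customer

def worth_rolling_out(annual_value, implementation_cost):
    """A winner is business significant only if it out-earns its rollout cost."""
    return annual_value > implementation_cost

# 50 leads/month and a 0.2% lift come from the example above;
# close rate, revenue per customer, and cost are assumed figures.
value = annual_value_of_lift(
    monthly_leads=50,
    relative_lift=0.002,
    close_rate=0.10,
    revenue_per_customer=5000,
)
print(f"Estimated annual value: ${value:,.0f}")
print("Roll out?", worth_rolling_out(value, implementation_cost=2000))
```

Under these assumptions the winning headline is worth about $600 a year, well below the cost of implementing it, even though the result itself could be statistically sound.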

Conversely, a test that doesn't reach significance isn't necessarily a failure. A null result on a high-priority hypothesis is valuable information, provided the test reached its planned sample size: it tells you the variable you tested is unlikely to move the needle much for your audience, which prevents you from wasting future resources optimizing it. An underpowered test that shows nothing is inconclusive rather than negative. Document null results with the same rigor you apply to wins.

Building a Structured 2026 Testing Calendar

The teams who extracted the most value from testing in 2025 weren't running ad hoc experiments — they were operating structured testing programs with quarterly roadmaps. A testing calendar allocates experiments by channel, defines test start and end dates, assigns ownership, and tracks the cumulative lift across the year. This structure also prevents the common problem of running overlapping tests that contaminate each other's results.

The SMART goal framework applies directly here. If your 2026 goal is to increase qualified leads by 20%, work backward from your 2025 conversion rates across channels to identify exactly which conversion points — if improved by which percentage — would achieve that outcome. Then build your testing roadmap around those specific conversion points, in order of potential impact. This turns a vague annual target into a concrete testing agenda.
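Working backward from the goal is mostly arithmetic. The sketch below takes the 20% qualified-lead target and asks, for each channel, how large a relative lift that channel would need if it had to deliver the entire increase on its own. The channel names and 2025 lead counts are hypothetical.

```python
def required_lift_by_channel(qualified_leads, target_growth):
    """For each channel, the relative lift it would need to deliver the
    whole target increase alone, sorted from most to least achievable."""
    total = sum(qualified_leads.values())
    extra_needed = total * target_growth
    lifts = {
        channel: extra_needed / count
        for channel, count in qualified_leads.items()
        if count > 0  # a channel with no leads has no baseline to lift
    }
    return dict(sorted(lifts.items(), key=lambda item: item[1]))

# Hypothetical 2025 qualified leads per channel.
leads_2025 = {"email": 400, "paid_search": 250, "organic": 350}

roadmap = required_lift_by_channel(leads_2025, target_growth=0.20)
for channel, lift in roadmap.items():
    print(f"{channel}: needs a {lift:.0%} lift to hit the goal alone")
```

In practice the target is split across several channels, but the ordering still shows where a given percentage improvement buys the most progress, which is the order to schedule tests in.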

The Most Common Test Marketing Mistakes in 2026

Given the stakes — defending budgets to skeptical leadership, operating under tighter privacy constraints, and competing in saturated channels — the cost of poorly designed tests is higher than it's ever been. These are the failures that appear most frequently, and how to avoid them.

Testing Too Many Variables at Once

The fastest way to destroy the learning value of a test is to change multiple elements simultaneously and then try to attribute the result to any one of them. This is more common with teams under time pressure — they want to "test everything at once to save time." In practice, they learn nothing they can act on with confidence. Enforce a one-variable-per-test rule for any test where the goal is learning, not just short-term performance lift.

Ignoring Audience Segmentation in Test Design

A message variant that outperforms for new prospects may actively hurt conversion for returning customers. Pooling these audiences into a single test produces an average result that's accurate for no one. In 2026, with platforms like ActiveCampaign offering sophisticated audience segmentation built into automation workflows, there's no technical reason to run unsegmented tests on heterogeneous audiences. Segment before you test, then validate whether winners hold across segments before scaling.
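A short worked example shows how pooling can hide opposite effects. The sketch below uses made-up results for two segments in which variant B wins with new prospects and loses with returning customers; the segment names and counts are illustrative assumptions.

```python
def conversion_rates(results):
    """results maps segment -> variant -> (conversions, recipients).
    Returns per-segment rates and the pooled rate for each variant."""
    per_segment = {
        segment: {variant: conv / n for variant, (conv, n) in variants.items()}
        for segment, variants in results.items()
    }
    totals = {}
    for variants in results.values():
        for variant, (conv, n) in variants.items():
            prev_conv, prev_n = totals.get(variant, (0, 0))
            totals[variant] = (prev_conv + conv, prev_n + n)
    pooled = {variant: conv / n for variant, (conv, n) in totals.items()}
    return per_segment, pooled

# Made-up test results: (conversions, recipients) per variant and segment.
results = {
    "new_prospects": {"A": (50, 1000), "B": (70, 1000)},
    "returning_customers": {"A": (100, 1000), "B": (80, 1000)},
}

per_segment, pooled = conversion_rates(results)
print("Pooled:", pooled)
for segment, rates in per_segment.items():
    print(segment, rates)
```

Pooled, the two variants tie at 7.5%, so an unsegmented readout would call the test a wash while each segment has a clear and opposite winner.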

Stopping Tests Early After a Positive Result

Peeking at results mid-test and stopping when you see a winning variant is one of the most statistically damaging habits in digital marketing. Early results in any test are volatile — a 15% lift on day three often disappears by day ten as the novelty effect wears off and you reach a less engaged segment of your audience. Commit to your predetermined minimum duration before making any scaling decision, regardless of how strong the early data looks.
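A small simulation makes the cost of peeking concrete. The sketch below runs A/A tests, where both variants share the same true conversion rate and every declared winner is therefore a false positive, and compares stopping at the first significant look with waiting for the planned end. The conversion rate, sample sizes, number of looks, and random seed are illustrative assumptions, and the simulation models only the repeated-look problem, not the novelty effect.

```python
import random
from statistics import NormalDist

def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = (pooled * (1 - pooled) * (1 / n_a + 1 / n_b)) ** 0.5
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def false_positive_rates(n_tests=400, n_per_arm=1000, rate=0.10,
                         looks=10, alpha=0.05, seed=1):
    """Return (peeking_rate, fixed_horizon_rate) across simulated A/A tests."""
    rng = random.Random(seed)
    step = n_per_arm // looks
    peeking_hits = fixed_hits = 0
    for _ in range(n_tests):
        conv_a = conv_b = 0
        significant_at_any_look = False
        last_p = 1.0
        for n in range(1, n_per_arm + 1):
            conv_a += rng.random() < rate  # both arms use the same true rate
            conv_b += rng.random() < rate
            if n % step == 0:  # an interim look at the dashboard
                last_p = p_value(conv_a, n, conv_b, n)
                if last_p < alpha:
                    significant_at_any_look = True
        peeking_hits += significant_at_any_look
        fixed_hits += last_p < alpha  # decision made only at the planned end
    return peeking_hits / n_tests, fixed_hits / n_tests

peeking_rate, fixed_rate = false_positive_rates()
print(f"False winners when stopping at first significant look: {peeking_rate:.1%}")
print(f"False winners when waiting for the planned end: {fixed_rate:.1%}")
```

Because the final look is one of the interim looks, the peeking rate can never be lower than the fixed-horizon rate; every additional look only adds chances to declare a winner that is not there.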

Failing to Document and Share Learnings

The compounding value of a test marketing program comes from institutional learning — the accumulated understanding of what your specific audience responds to, across channels and over time. Teams that run tests without a formal documentation process lose that value every time someone leaves the company or the context shifts. Build a simple shared log: hypothesis, test design, results, business impact, decision taken, and rationale. Six months of that log is worth more than any individual test result.
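The shared log does not need special tooling. As one possible shape, the sketch below models an entry with exactly the six fields listed above and serializes it as a JSON line that can be appended to a shared file; the class name, function name, and sample entry are hypothetical.

```python
import json
from dataclasses import dataclass, asdict

@dataclass
class ExperimentLogEntry:
    """One row in the shared test log; fields mirror the list above."""
    hypothesis: str
    test_design: str
    results: str
    business_impact: str
    decision: str
    rationale: str

def to_json_line(entry):
    """Serialize an entry as a single JSON line for an append-only log."""
    return json.dumps(asdict(entry))

# Hypothetical entry; the content is illustrative, not a real result.
entry = ExperimentLogEntry(
    hypothesis="If we shorten the subject line, open rate rises because "
               "mobile readers see the full line.",
    test_design="A/B email test, one variable, 2-week window",
    results="No significant difference at the planned sample size",
    business_impact="None; no rollout cost incurred",
    decision="Keep current subject line format",
    rationale="Null result on an adequately sized test",
)

line = to_json_line(entry)
print(line)
```

An append-only file of such lines stays readable in a spreadsheet or a script, and it survives team changes better than results scattered across platform dashboards.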

Building a Test Marketing Culture for Long-Term Growth

The most important shift in 2026 isn't methodological — it's cultural. Test marketing only compounds in value when it's treated as a continuous operating discipline rather than a one-time project. The organizations that entered 2025 with mature testing programs were the ones best equipped to respond to the ROI scrutiny, the attribution challenges, and the rapid shifts in channel performance that defined last year.

Start by allocating a fixed percentage of your marketing budget — most practitioners recommend 10–15% — specifically for experimentation, separate from performance campaign spend. This ring-fenced budget removes the political friction of justifying individual tests against short-term revenue targets. It signals to the team that learning is a strategic objective, not a luxury. And it gives you the operational freedom to test ideas that won't pay off in Q1 but could reshape your entire acquisition model by Q3.

The data exists. The tools exist. The frameworks exist. In 2026, the competitive differentiator is execution — the discipline to test before you scale, measure before you conclude, and document before you move on.